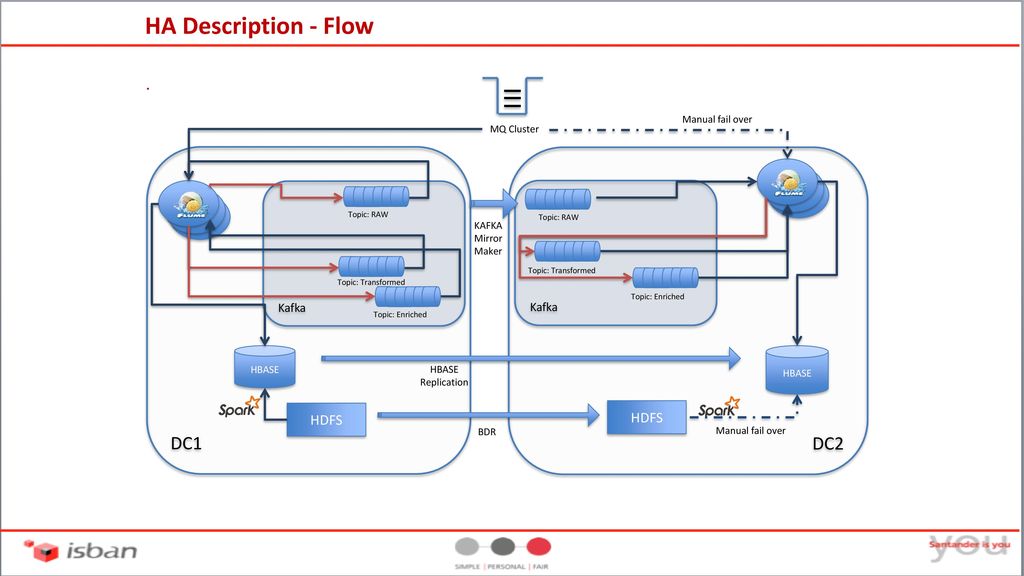

Each time Curator restores the data, it makes sure that only incremental data is restored at the other end and that this data is available for search or for the DR case.

Cross-Cluster Search: Cross-Cluster Search has recently started receiving support.

One can configure Curator to take snapshots from DC1 at a regular interval and restore them at DC2 continuously or on a schedule.
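The snapshot-and-restore cycle described here can be sketched as the two Elasticsearch snapshot REST calls that such a job ultimately issues. This is only an illustration under stated assumptions: the repository name `dc_backup`, the index pattern `logs-*`, and both helper functions are hypothetical, and the requests are built as plain dictionaries rather than sent to a cluster.

```python
from datetime import datetime, timezone


def snapshot_request(repo, indices, now):
    """Build the call that snapshots `indices` on the DC1 cluster.

    `repo` is a hypothetical repository name that both clusters can reach.
    """
    name = "snap-" + now.strftime("%Y%m%d%H%M%S")  # snapshot names must be lowercase
    return {
        "method": "PUT",
        "path": f"/_snapshot/{repo}/{name}",
        "params": {"wait_for_completion": "true"},
        "body": {"indices": indices, "include_global_state": False},
    }


def restore_request(repo, snapshot_name, indices):
    """Build the call that restores a named snapshot on the DC2 cluster."""
    return {
        "method": "POST",
        "path": f"/_snapshot/{repo}/{snapshot_name}/_restore",
        "params": {"wait_for_completion": "true"},
        "body": {"indices": indices, "include_global_state": False},
    }


if __name__ == "__main__":
    taken_at = datetime.now(timezone.utc)
    snap = snapshot_request("dc_backup", "logs-*", taken_at)
    name = snap["path"].rsplit("/", 1)[-1]
    print(snap["method"], snap["path"])
    print(restore_request("dc_backup", name, "logs-*")["path"])
```

A scheduler (cron, for example) would run the first call against DC1 and the second against DC2 at the chosen interval. Note that Elasticsearch only restores over an existing index if that index is closed, so the restore side usually needs a close or delete step that this sketch leaves out.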

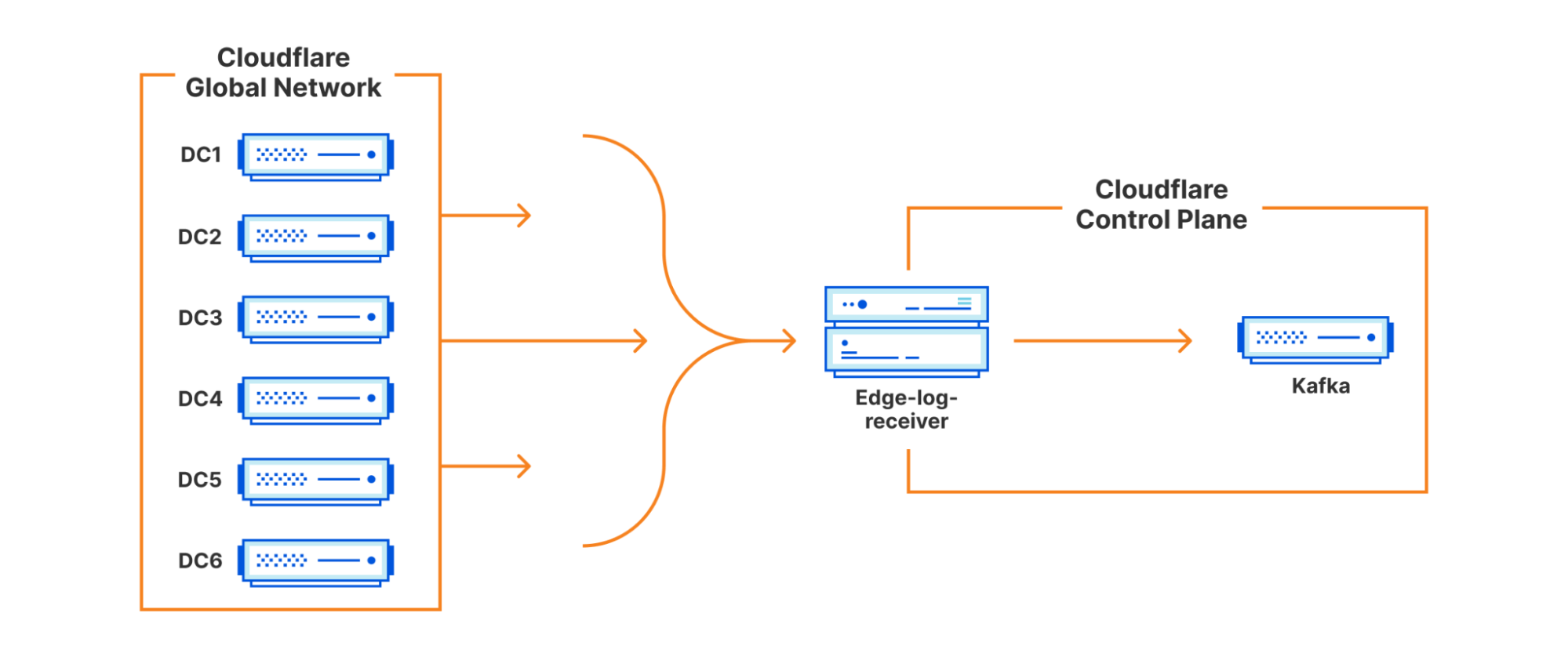

When nodes must resynchronize after a link outage while still handling the full indexing and request load, larger or more powerful clusters are needed to ensure enough CPU and IOPS to maintain acceptable performance during such events.

Now, let’s look at a possible solution for running Elastic Stack across data centers. One can configure Elastic Stack with a message queue such as Kafka or Redis MQ. Beats can send data to a message queue that is replicated to both DCs. From these message queues, the local Logstash processes the data and sends it to the local Elasticsearch cluster. Each DC therefore has its own ES cluster, and documents from the relevant queue are indexed into the local ES cluster only. If the network between the DCs goes down, queue replication resumes from where it left off once the link is back, and indexing into the local ES cluster continues.

If someone really does not want an “Active-Active” cluster at both DCs, one can use the Curator tool to take continuous or scheduled snapshots and restore them at the other end.
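The queue-based design can be illustrated with a small in-memory simulation. It is a sketch, not a Kafka or Logstash client: `MessageQueue`, `mirror`, and `LocalIndexer` are hypothetical stand-ins for a replicated topic, the cross-DC replication job, and the local Logstash plus Elasticsearch pair, and replication is assumed to run one way from DC1 to DC2 for simplicity.

```python
class MessageQueue:
    """Stand-in for one DC's copy of a replicated topic (append-only log)."""

    def __init__(self):
        self.log = []


def mirror(source, target):
    """Copy only the events the target has not received yet.

    Because it starts at the target's current length, it resumes where it
    left off after an outage. Returns the number of events copied.
    """
    missing = source.log[len(target.log):]
    target.log.extend(missing)
    return len(missing)


class LocalIndexer:
    """Stand-in for the local Logstash + Elasticsearch pair in one DC."""

    def __init__(self, queue):
        self.queue = queue
        self.offset = 0      # position of the next unread event
        self.indexed = []    # documents "indexed" into the local cluster

    def poll(self):
        batch = self.queue.log[self.offset:]
        self.indexed.extend(batch)
        self.offset += len(batch)
        return len(batch)


def run_demo():
    dc1_queue, dc2_queue = MessageQueue(), MessageQueue()
    dc1_es, dc2_es = LocalIndexer(dc1_queue), LocalIndexer(dc2_queue)

    # Link up: events reach both DCs and each DC indexes locally.
    dc1_queue.log.extend(["e1", "e2"])
    mirror(dc1_queue, dc2_queue)
    dc1_es.poll()
    dc2_es.poll()

    # Link down: DC1 keeps indexing, DC2 simply has nothing new to read.
    dc1_queue.log.extend(["e3", "e4"])
    dc1_es.poll()
    read_during_outage = dc2_es.poll()

    # Link restored: mirroring resumes and DC2 catches up.
    copied_after_outage = mirror(dc1_queue, dc2_queue)
    dc2_es.poll()
    return dc1_es.indexed, dc2_es.indexed, read_during_outage, copied_after_outage


if __name__ == "__main__":
    print(run_demo())
```

The point of the design is that neither Elasticsearch cluster ever talks to the other over the WAN; only the queue crosses the link, and it tolerates being behind.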

#Using kafka tool create dc1 to dc2 full#

Recently, I came across a situation where a large organization was building the ELK stack (Elastic Stack) across data centers, with the architecture shown below. If you are a System Admin or a DBA, there is nothing wrong with this architecture as a way to achieve a DC/DR deployment. But there are some limitations when you run a cross-DC cluster with Elastic Stack.

Typically, an Elastic Stack architecture is designed as a single data center solution, with all Elasticsearch nodes in one DC. In the solution shown above, the ES nodes are spread across two DCs. This architecture creates some challenges, as described below.

Network disruption is very common over a WAN, especially when the DCs or servers are physically separate. Most large organizations have dedicated WAN links with very good speed and latency. Elasticsearch is built to be resilient to network disconnects, but that resiliency is intended to handle the exception, not the norm.

Latency is a very common problem on WAN networks, and high network latency may slow indexing in Elasticsearch. Elasticsearch indexes data into the primary shards first and then replicates it to the replicas. If there is latency in the network, indexing becomes slow, and a missing shard (Secondary Replica) is also a very common problem. If the DCs lose network connectivity or are isolated for even a few milliseconds, the remote shard is likely to go missing and come back in a disconnected state. To recover from this, the replica must be synced with the primary again to provide consistent results.

In that eventuality, assume the DC1-to-DC2 network is down for a few milliseconds while Master node1 is acting as the elected master in DC1. The master-eligible node in DC2 may then start electing a new master within DC2. This election starts because the DC2 master-eligible node cannot ping the existing master node in DC1 over the unreliable network. Even though Elastic Stack uses Zen Discovery to track node availability, an unreliable network can still cause a problem here. As a result, DC2 may start indexing new data that is inconsistent with DC1. When the link is restored, these nodes will also be pushing data and documents across the network while still handling the full indexing and request load.
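The master-election scenario described in this section can be made concrete with a little arithmetic. The sketch below assumes the pre-7.0 Zen Discovery behaviour, in which a group of nodes may elect a master only if it can see at least `discovery.zen.minimum_master_nodes` master-eligible nodes. The node counts and the helper functions are hypothetical and only model the rule; they do not talk to a cluster.

```python
def quorum(master_eligible_total):
    """Majority of master-eligible nodes: (N / 2) + 1 with integer division."""
    return master_eligible_total // 2 + 1


def can_elect_master(visible_master_eligible, minimum_master_nodes):
    """A partition can elect a master only if it sees enough eligible nodes."""
    return visible_master_eligible >= minimum_master_nodes


def partition_outcome(dc1_nodes, dc2_nodes, minimum_master_nodes):
    """Which side of a DC1/DC2 network split is able to have a master."""
    return {
        "dc1_has_master": can_elect_master(dc1_nodes, minimum_master_nodes),
        "dc2_has_master": can_elect_master(dc2_nodes, minimum_master_nodes),
    }


if __name__ == "__main__":
    # Two master-eligible nodes in DC1, one in DC2, threshold left at 1:
    # both sides can elect a master, so they may diverge (split brain).
    print(partition_outcome(2, 1, minimum_master_nodes=1))

    # Same layout with the threshold set to the quorum of 3 nodes:
    # only DC1 keeps a master, so DC2 stops accepting writes.
    print(partition_outcome(2, 1, minimum_master_nodes=quorum(3)))

    # Two nodes per DC with the quorum of 4 nodes: neither side has a
    # majority, so the cluster is consistent but unavailable during the split.
    print(partition_outcome(2, 2, minimum_master_nodes=quorum(4)))
```

Setting the threshold to a majority avoids two masters, but as the last case shows, an even split across two DCs then leaves neither side writable, which is one more reason a single cluster stretched over a WAN is fragile.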